Research Overview

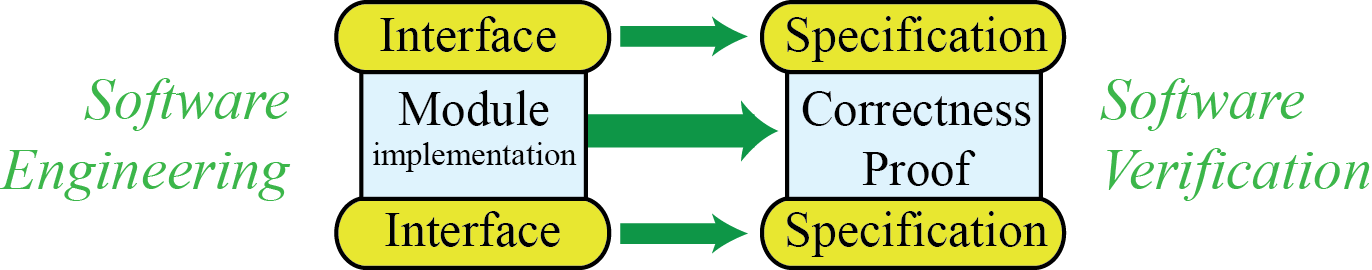

In software engineering, a module exports an interface (or “API”, Application Programmer Interface), and is itself the client of lower-level interfaces. In software verification, we write a specification corresponding to each interface, and then we can prove the module correct with respect to its specification:

In software engineering, interfaces are often written in programming languages: C language header files with function prototypes, or Java language class declarations. These can describe the types of the interface functions, but cannot specify their behavior. Modeling languages such as UML can do a bit better, but are still not rich enough to fully characterize what we want the module to do for us.

Program verification logics can use the full expressiveness of formal logic to characterize behavior, but (historically) they have not been "live:" they have been about models of the program, not connected directly and mechanically to the program itself. (In contrast, the compiler’s type-checker makes sure, every time you compile, that types in your API spec match the types in the implementation.)

Using formal logic is good, because then we can verify programs using automated provers and proof-checkers, to guarantee that module implementations satisfy their specifications.

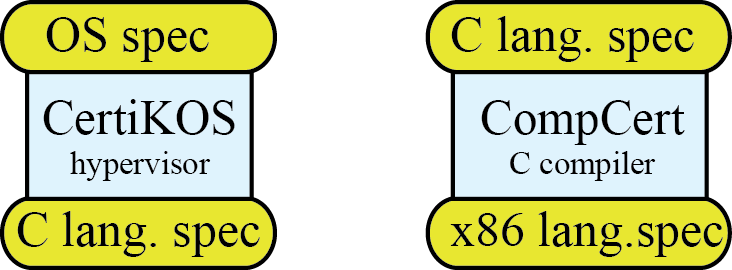

But there’s another important consideration: is the specification two-sided? Has it been exercised from both above and below? Let us explain. Our research is in the specification and verification of substantial components such as operating-system kernels, compilers, and program analysis tools. For example, CertiKOS is a hypervisor kernel, implemented in C. It should be proved correct with respect to the specification of an operating system (properties such as process isolation and fair scheduling). But this proof is a client of the specification of the C programming language; without that formal semantics, we cannot reason about the behavior of the code. Meanwhile, CompCert is a C compiler, proved correct with respect to the formal semantics of the C language, and also the formal semantics of the machine language that it emits:

What we often find in practice (for example) is that the C language spec used in proving CertiKOS does not match the C language spec delivered by CompCert. CompCert’s C spec was designed and exercised in proving CompCert correct, and (from the client’s point of view) can be underspecified or overrestrictive. CertiKOS may demand features from the spec that CompCert does not provide. Only when we put these modules together will we really exercise the C language spec in a two-sided way, and from that we learn how to improve and strengthen the spec.

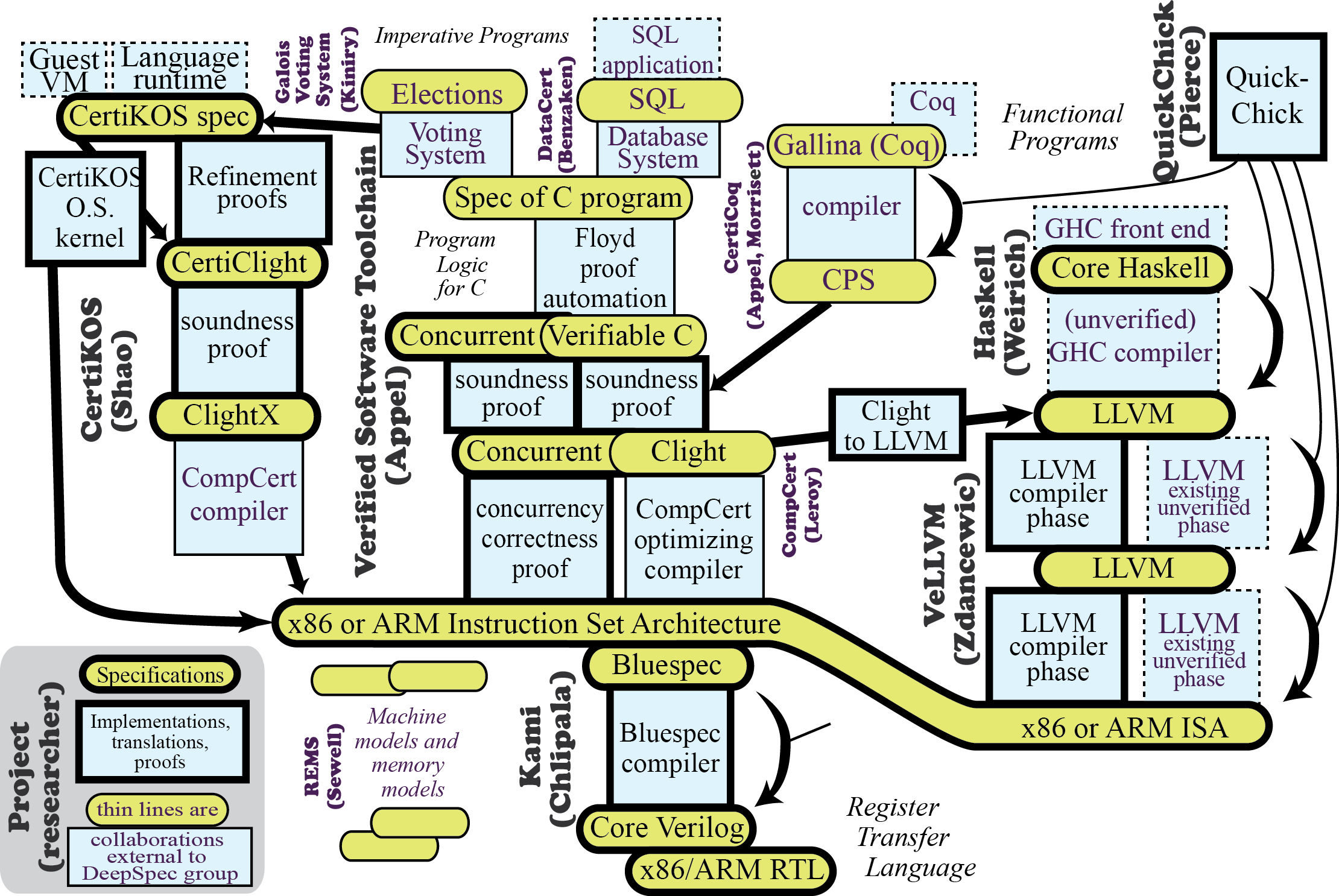

The investigators in the DeepSpec team each had major research projects underway. (You can browse them in the sidebar at left!) Each of these projects imports some specifications, and exports others. The specifications are rich, live, and formal. But only by connecting them together can we ensure that the specs will be two-sided. Therefore, we envision this network of verified software components, connected by deep specifications—specifications that are rich, live, formal, and two-sided.

A Network of Specifications

General insights must begin with experience specifying and implementing particular systems. The core goal of this Expedition is to create a set of interrelated specifications---and artifacts connecting them---drawn from the security-critical ``middle layers'' of software systems.

The diagram shows this network of deep specifications and the artifacts (implementations, translations, or proofs) connecting them, as we envision it at the end of the Expedition. Large vertical labels on the different regions of the diagram indicate which PI will take main responsibility for them. Components labeled in purple are by external collaborators.

At the top of the diagram are specifications of application-level interfaces such as the semantics of the SQL query language or the rules governing fair Elections. At the bottom is the semantics of the RTL (register-transfer language) implementation of some specific microprocessor, say x86. A voting or database system is implemented as a C program with a specification Spec of C program, written in a program logic called Verifiable C. We prove (in Coq) that the C program correctly implements SQL by applying the program logic to the program, with the assistance of the Floyd proof automation system. Verifiable-C is a set of reasoning rules for program correctness; whatever properties are proved about a program will actually hold when the program runs; this is formalized in Coq as the soundness proof connecting Verifiable C to the operational semantics of C-light. The CompCert optimizing compiler compiles C light to machine language in ARM, PowerPC, or x86-ISA. The correctness of CompCert's translation is proved in Coq. Finally, the hardware is correct: the instruction set architecture x86-ISA is specified in the Bluespec hardware-description language, which itself has a deep specification defining its precise semantics. The Kami synthesizer translates this to register transfers written in Verilog (which, again, itself has a deep specification).

Of course, user-level applications don't run on the bare machine---an operating system is needed. Bugs and vulnerabilities in the OS can compromise the system, as can misunderstandings of the OS-client interface. Thus we also need specification and verification at this level. CertiKOS is a formally verified hypervisor kernel, with respect to a specification CertiKOS-spec. It is proved correct using refinement proofs in the CertiClight program logic; this is proved sound with respect to a variant of the C-light language compiled by CompCert. Thus it connects end-to-end through x86-ISA to x86-RTL.

LLVM is a 21st-century industry-standard compiler intermediate representation and toolset. It has a deep specification LLVM, together with proofs of correctness of critical LLVM compiler phases with respect to this specification. The CompCert C-light front end is connected to the LLVM back end using a verified Clight to LLVM translator.

Haskell is a widely used functional programming language. The Glasgow Haskell Compiler (GHC) translates into a high-level internal language, which we will deeply specify as Core-Haskell GHC translates Core Haskell to LLVM.

An alternative to Haskell, for functional programming, is the Gallina language embedded within Coq's own logic. Gallina will connect, via a verified toolchain, through a continuation-passing style CPS intermediate representation, to CompCert C-light.

The end result is an x86-RTL circuit, a binary representation of the instructions for the operating system and user code, and an end-to-end proof that executing these instructions on this circuit will produce the behavior described by a user-level specification (e.g., SQL, Elections, Gallina, Haskell programs, etc).

Solid-line boxes in the diagram represent formal verifications. But even the unverified (dashed-line) boxes can be given significantly higher assurance with property-based random testing based on deep specifications. Rigorous random testing can also be used to develop and debug components and specifications that are ultimately intended for formal verification, elsewhere in the diagram. This is the job of the QuickChick tool.

Some of the specifications and connecting components in the diagram already exist as individual point efforts. To achieve the aspiration of end-to-end connected verification as outlined in the figure, we must close significant specification gaps between them. This requires research, and in most cases, research breakthroughs. Indeed, the specification of C-light is arguably the only truly deep specification in the whole diagram, because it has been vigorously exercised from both above (Verifiable C soundness proof) and below (CompCert correctness proof). But even Clight's spec needs work on its handling of concurrency and distributed programming. Likewise, the current CertiKOS-spec has not yet been significantly exercised from above; the x86-ISA spec has not been exercised from below; the LLVM spec is not modular enough to cope with the extensibility of industrial LLVM; the current Bluespec and Verilog specs are just demonstration prototypes for small subsets; and the Core-Haskell spec is not yet begun.

The existing specifications of CertiClight and Verifiable C are close to being deep, individually, but there are significant gaps between them. A major goal of this Expedition is to unify the refinement-based CertiClight approach to program verification with the separation-logic-based Verifiable C. Another goal is to extend the Verifiable C program logic to allow reasoning about concurrent and distributed programs.

It would be a major breakthrough to build the coherent system of specified/verified components that we have in mind. The specific verified artifacts are chosen to implement interfaces that apply broadly outside the context of the Expedition, such as C and x86 assembly. However, the greatest pay-off will come in elucidating the conceptual basis of deep specifications as a technique for abstraction and modularity, with the potential for equal or greater practical benefit as from ideas like data abstraction. And the benefits increase with scale. Concerning internal specifications such as the C-light language definition, one does not have to wonder, ``did I specify the right behavior?''---since the end-to-end proof about the SQL system is proved w.r.t. the SQL and x86-RTL specifications. We will study the pragmatics of building a complete system whose exposed specification deals only with, say, the semantics of Verilog and SQL, guaranteeing sound treatment of all intermediate implementations and verifications.